Quantum Programming: Bridging the Abstract and the Applied in the New Computational Epoch

Abstract

Quantum computing represents a paradigm shift in computational capability, leveraging the counterintuitive principles of quantum mechanics to solve classes of problems that are intractable for even the most powerful classical supercomputers. This research report provides a comprehensive academic analysis of quantum programming, the discipline dedicated to harnessing this nascent power. It delineates the fundamental principles of quantum information processing, contrasts them with classical computing paradigms, and explores the evolving landscape of quantum programming models and languages. The report offers a detailed examination of seminal quantum algorithms that underpin the field’s transformative potential, from cryptography to molecular simulation. Furthermore, it confronts the significant challenges that currently bound the practicality of quantum computation, including decoherence, error correction, and hardware limitations, while also surveying the ambitious roadmaps laid out by industry leaders. The synthesis presented herein concludes that quantum programming is not merely an extension of classical computer science but a foundational new field, demanding a convergent expertise in physics, mathematics, and computer science to unlock its full potential for tackling humanity’s most complex scientific and commercial problems.

Introduction to the Quantum Computational Paradigm

The journey from classical to quantum computing is a transition from the deterministic world of binary logic to the probabilistic and multidimensional realm of quantum mechanics. Classical computers, the engines of the digital age, process information as bits that exist in a definite state of either zero or one. Every computation, from the simplest addition to the most complex neural network training, is ultimately a sequence of manipulations of these binary states. In stark contrast, quantum computers operate on quantum bits, or qubits, which exploit the phenomena of superposition and entanglement. A qubit can be in the state zero, the state one, or any quantum superposition of the two. Because the joint state of n qubits is described by 2^n complex amplitudes, a quantum computer can manipulate an exponentially large state space with a modest number of qubits. When qubits become entangled, a profound correlation links their fates; the state of one qubit cannot be described independently of the state of the others, regardless of the physical distance separating them. Entanglement is a critical resource for the coordinated multi-qubit computations that underlie quantum advantage.

The potential of this new paradigm is not merely theoretical; it promises tangible revolutions across multiple disciplines. Quantum computing holds the key to solving specific problems with exponential speedups over the best-known classical algorithms. This capability could redefine the boundaries of scientific discovery and industrial optimization. For instance, the simulation of quantum mechanical systems, such as complex molecules for drug design or novel materials for batteries, is notoriously difficult for classical computers due to the exponential growth of parameters required. A quantum computer, by its very nature, is adept at modeling such systems, offering a path to accelerate the development of new pharmaceuticals, more efficient catalysts, and next-generation energy storage solutions. Furthermore, in the realm of optimization, quantum algorithms could revolutionize logistics and supply chains by finding optimal routes and schedules from a staggering number of combinations in a fraction of the time currently required. Even the field of artificial intelligence stands to be transformed, as quantum parallelism could rapidly train machine learning models on enormous, complex datasets. The seismic implications extend to cybersecurity, where Shor’s algorithm threatens to render current public-key encryption obsolete, thereby spurring the parallel field of post-quantum cryptography. These applications are not distant science fiction; they are the driving force behind global investments from corporations and governments that recognize quantum computing as a strategic technology for the future.

The Architectonics of Quantum Information: Qubits, Gates, and Circuits

At the heart of quantum programming lies the qubit, the fundamental unit of quantum information. The behavior of a qubit is governed by the mathematics of quantum state vectors in a two-dimensional Hilbert space. Unlike a classical bit, the state of a single qubit is described by a vector that is a linear combination of the basis states, zero and one, each weighted by a complex coefficient called a probability amplitude. When measured, a qubit collapses from its superposition into one of the definite basis states, with the probability of each outcome given by the squared magnitude of the corresponding amplitude. This interplay between superposition during computation and probabilistic collapse upon measurement is a cornerstone of quantum programming logic. The essence of quantum algorithm design is to manipulate these amplitudes with quantum gates so that pathways leading to correct answers reinforce one another while pathways leading to wrong answers cancel. This process, known as quantum interference, is what allows certain quantum algorithms to solve problems more efficiently than their classical counterparts.
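The relationship between amplitudes, measurement probabilities, and interference can be made concrete with a minimal sketch in plain Python. It assumes a single qubit represented as a pair of complex amplitudes; the names `KET_0`, `hadamard`, and `probabilities` are illustrative and do not come from any particular framework.

```python
import math

# A single-qubit state is a pair of complex amplitudes (alpha, beta)
# for the basis states zero and one, with |alpha|^2 + |beta|^2 = 1.
KET_0 = (1 + 0j, 0 + 0j)


def hadamard(state):
    """Apply the Hadamard gate, which maps a basis state to an equal superposition."""
    alpha, beta = state
    s = 1 / math.sqrt(2)
    return (s * (alpha + beta), s * (alpha - beta))


def probabilities(state):
    """Measurement probabilities are the squared magnitudes of the amplitudes."""
    return tuple(abs(a) ** 2 for a in state)


superposed = hadamard(KET_0)        # equal superposition of zero and one
interfered = hadamard(superposed)   # the two contributions to "one" cancel

p_super = probabilities(superposed)
p_back = probabilities(interfered)
print(p_super)
print(p_back)
```

After one Hadamard gate both outcomes are equally likely. After a second, the two contributions to the outcome one carry opposite signs and cancel, while the contributions to zero add, so the qubit returns to zero with near-certainty (up to floating-point rounding). This is interference in its smallest form.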

Quantum computations are expressed as quantum circuits, which are sequences of quantum gates applied to a set of qubits. These gates are unitary transformations that evolve the state of the qubits reversibly. Basic single-qubit gates include the Pauli-X gate, which acts as a quantum analogue of the NOT gate by flipping the basis states, and the Hadamard gate, which is crucial for creating superposition states from a definite initial state. Multi-qubit gates form the connective tissue of quantum circuits, creating entanglement and enabling complex interactions. The controlled-NOT (CNOT) gate is a paradigmatic two-qubit gate; it flips a target qubit if and only if a control qubit is in the state one, thereby establishing a correlation between them. The Toffoli gate, or controlled-controlled-NOT, acts as a reversible AND gate, further illustrating how universal classical logic can be implemented within the quantum framework. A small set of gates, such as Hadamard, phase, T (π/8), and CNOT, forms a universal gate set, meaning any quantum computation can be approximated to an arbitrary level of accuracy using only these gates. The T gate is essential here: circuits built from Hadamard, phase, and CNOT alone can be simulated efficiently on a classical computer. The quantum circuit model, which chains these gates together, has become the predominant abstraction for quantum algorithm development, providing a structured framework for programmers to design and reason about quantum algorithms before they are executed on physical hardware.
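As an illustration of how a Hadamard gate followed by a CNOT creates entanglement, the following sketch simulates a two-qubit circuit with a small state-vector routine written in plain Python. The helper names `apply_single` and `apply_cnot`, and the convention that qubit 0 is the least significant bit of the basis-state index, are assumptions made for this example rather than the interface of any real framework.

```python
import math


def apply_single(state, gate, target):
    """Apply a 2x2 gate matrix to qubit `target` of a state vector.

    Assumed convention: qubit 0 is the least significant bit of the index.
    """
    new = [0j] * len(state)
    for i, amp in enumerate(state):
        bit = (i >> target) & 1
        for out in (0, 1):
            j = (i & ~(1 << target)) | (out << target)
            new[j] += gate[out][bit] * amp
    return new


def apply_cnot(state, control, target):
    """Flip `target` in every basis state where `control` is one."""
    new = [0j] * len(state)
    for i, amp in enumerate(state):
        j = i ^ (1 << target) if (i >> control) & 1 else i
        new[j] += amp
    return new


S = 1 / math.sqrt(2)
H = [[S, S], [S, -S]]   # Hadamard gate
X = [[0, 1], [1, 0]]    # Pauli-X gate

# Pauli-X acts as a NOT on a single qubit: zero becomes one.
flipped = apply_single([1 + 0j, 0j], X, target=0)

# Hadamard on qubit 0, then CNOT with qubit 0 as control, starting from 00.
state = [1 + 0j, 0j, 0j, 0j]
state = apply_single(state, H, target=0)
state = apply_cnot(state, control=0, target=1)

bell_probs = [abs(a) ** 2 for a in state]
print(bell_probs)
```

The resulting state has non-zero probability only for the outcomes 00 and 11, each close to one half, so measuring one qubit fixes the result of the other. Neither qubit can be assigned a state of its own, which is the correlation the CNOT establishes.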

The Ecosystem of Quantum Programming: Models, Languages, and Frameworks

The landscape of quantum programming is diverse, encompassing several computational models tailored to different hardware approaches and algorithmic strategies. The gate model, or quantum circuit model, is the most widely adopted paradigm. In this model, computation is expressed as a sequence of quantum logic gates applied to a register of qubits, culminating in a measurement. This approach is direct and intuitive for programmers familiar with the digital logic of classical circuits. An alternative model is measurement-based quantum computing, which starts with a highly entangled cluster state and performs computation through a sequence of adaptive measurements on individual qubits. While computationally equivalent to the gate model, it offers a different perspective on the resource requirements for quantum computation. A third model, adiabatic quantum computing, formulates computation as the gradual evolution of a simple initial quantum system into a complex final system whose ground state encodes the solution to an optimization problem. This model is the foundation for quantum annealers, which are specialized devices designed to tackle specific combinatorial optimization problems.

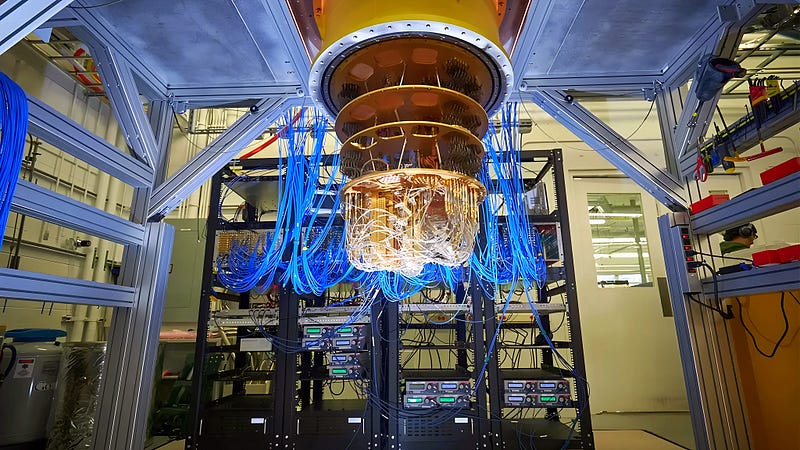

To translate these abstract models into executable code, a new generation of programming languages and software development kits has emerged. Qiskit, an open-source framework developed by IBM and built using Python, has gained widespread traction in both academic and industrial circles. It allows programmers to construct quantum circuits, simulate them on classical hardware, and run them on real quantum processors via the cloud. Similarly, Google’s Cirq framework provides tools for writing, manipulating, and optimizing quantum circuits intended to run on their superconducting quantum processors. Microsoft has taken a different approach by developing Q#, a standalone, domain-specific programming language with a native type system for qubits and operators, integrated with a dedicated simulator. Other notable platforms include Amazon Braket, which offers a managed service for experimenting with quantum computers from multiple hardware providers, and Rigetti’s Forest SDK for their superconducting qubit technology. These frameworks typically comprise a full software stack, including high-level language interfaces, compilers that translate algorithms into native gate operations, and optimizers that tailor circuits to the specific topological constraints and noise profiles of target hardware. This rapidly maturing software ecosystem is crucial for abstracting away the underlying physical complexity and making quantum computing accessible to a broader community of developers and researchers.
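To give a feel for what a compiler pass in such a stack does, here is a toy sketch that rewrites SWAP gates into CNOTs, a common step when the target hardware offers no native SWAP. The tuple-based circuit representation and the names `decompose_swaps` and `run_on_bits` are hypothetical and are not the API of Qiskit, Cirq, or any other framework named above.

```python
from itertools import product


def decompose_swaps(circuit):
    """Rewrite each ("swap", a, b) as three CNOTs; other gates pass through."""
    native = []
    for gate in circuit:
        if gate[0] == "swap":
            _, a, b = gate
            native += [("cx", a, b), ("cx", b, a), ("cx", a, b)]
        else:
            native.append(gate)
    return native


def run_on_bits(circuit, bits):
    """Evaluate a circuit of x / cx / swap gates on a classical basis state."""
    bits = list(bits)
    for gate in circuit:
        if gate[0] == "x":
            bits[gate[1]] ^= 1
        elif gate[0] == "cx":
            control, target = gate[1], gate[2]
            bits[target] ^= bits[control]
        elif gate[0] == "swap":
            a, b = gate[1], gate[2]
            bits[a], bits[b] = bits[b], bits[a]
        else:
            raise ValueError(f"unsupported gate: {gate[0]}")
    return tuple(bits)


circuit = [("x", 0), ("swap", 0, 2), ("cx", 2, 1)]
native = decompose_swaps(circuit)

# Every gate here permutes basis states, so agreement on all basis states
# means the two circuits implement the same transformation.
equivalent = all(
    run_on_bits(circuit, bits) == run_on_bits(native, bits)
    for bits in product((0, 1), repeat=3)
)
print(native, equivalent)
```

Production compilers go much further, for example by inserting SWAPs so that two-qubit gates only act on physically connected qubits and by choosing decompositions that minimize accumulated noise, but the pattern of rewriting a circuit into an equivalent one over a restricted gate set is the same.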

Foundational Algorithms and Prospective Applications

The theoretical power of quantum computing is concretely demonstrated by a collection of groundbreaking algorithms that offer profound speedups. Grover’s search algorithm provides a quadratic speedup for searching an unstructured database, reducing the number of queries from order N for a classical linear search to order square root of N. This constitutes a significant advantage for large N and has implications for general problem-solving and data search tasks. An even more dramatic speedup, exponential relative to the best known classical methods, is achieved by Shor’s algorithm for integer factorization. The difficulty of factoring large integers is the bedrock of widely used cryptographic systems like RSA. Shor’s algorithm, by leveraging the quantum Fourier transform, can factor integers in polynomial time, thereby posing a potential threat to current cryptographic protocols and galvanizing research into quantum-resistant cryptography. Beyond these famous examples, the quantum Fourier transform serves as a key subroutine in many other algorithms, including those for solving the hidden subgroup problem, which generalizes the period-finding task at the core of Shor’s factoring algorithm.
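The quadratic speedup of Grover’s algorithm can be seen in a small classical simulation. The sketch below tracks the N amplitudes directly, so it only illustrates the mathematics and offers no speedup itself. The function name `grover_search` and the choice of N = 16 with marked index 11 are arbitrary assumptions for the example.

```python
import math


def grover_search(n_items, marked):
    """Simulate Grover's algorithm on a list of n_items real amplitudes."""
    # Start in the uniform superposition over all items.
    state = [1 / math.sqrt(n_items)] * n_items
    # About (pi / 4) * sqrt(N) iterations maximize the success probability.
    iterations = math.floor(math.pi / 4 * math.sqrt(n_items))
    for _ in range(iterations):
        # Oracle: flip the sign of the marked item's amplitude.
        state[marked] = -state[marked]
        # Diffusion: reflect every amplitude about the mean amplitude.
        mean = sum(state) / n_items
        state = [2 * mean - a for a in state]
    return [a * a for a in state], iterations


probs, iterations = grover_search(16, marked=11)
best = max(range(len(probs)), key=probs.__getitem__)
print(iterations, best, round(probs[best], 4))
```

With 16 items, three iterations concentrate roughly 96% of the measurement probability on the marked item, whereas a classical search would need about eight lookups on average. Each iteration is one round of the constructive and destructive interference described earlier.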

The current era of quantum computing is commonly described as the Noisy Intermediate-Scale Quantum (NISQ) era, characterized by devices that contain dozens to hundreds of qubits but lack full error correction. In this context, a family of algorithms known as variational quantum algorithms (VQAs) has become particularly important. These hybrid quantum-classical algorithms delegate a portion of the computation, typically an optimization loop, to a classical computer, while using the quantum processor to evaluate a cost function, often for quantum chemistry or optimization problems. The variational quantum eigensolver (VQE) is a prominent VQA designed to find the ground state energy of molecular systems, a task of immense value in drug discovery and materials science. In related hybrid work, researchers at IBM have used sample-based quantum diagonalization to estimate the ground state energy of complex molecules such as an iron-sulfur cluster, in circuits using up to 77 qubits, a problem beyond the scale of exact classical diagonalization. Beyond scientific simulation, practical quantum applications are being piloted in diverse fields. In finance, quantum algorithms are being explored for portfolio optimization and risk modeling. In logistics, they hold promise for solving complex route and traffic optimization problems in real time. Even in machine learning, quantum approaches are being investigated to process complex datasets and improve model training. As hardware fidelity improves, these algorithmic blueprints are gradually being transformed into tools for practical advantage.
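The hybrid loop behind a variational quantum eigensolver can be sketched on a deliberately tiny problem. The example below assumes a one-qubit Hamiltonian H = Z + X and a one-parameter trial state Ry(theta) applied to zero; both are illustrative choices, not taken from any cited experiment. The `energy` function stands in for the quantum processor and computes the expectation value exactly, whereas real hardware would estimate it from repeated measurements.

```python
import math


def energy(theta):
    """Expectation value of H = Z + X in the trial state Ry(theta) applied to zero."""
    a, b = math.cos(theta / 2), math.sin(theta / 2)  # amplitudes of zero and one
    expect_z = a * a - b * b
    expect_x = 2 * a * b
    return expect_z + expect_x


def parameter_shift_gradient(theta):
    """Gradient from two extra energy evaluations at shifted parameter values."""
    return (energy(theta + math.pi / 2) - energy(theta - math.pi / 2)) / 2


def minimize(theta=0.1, learning_rate=0.2, steps=200):
    """Classical outer loop: plain gradient descent on the measured energy."""
    for _ in range(steps):
        theta -= learning_rate * parameter_shift_gradient(theta)
    return theta, energy(theta)


theta_opt, e_min = minimize()
print(theta_opt, e_min)
```

The loop converges to an energy of about -1.4142, which matches the exact ground state energy of this Hamiltonian, the negative square root of two. The division of labour is the point of the sketch: the quantum device only evaluates the cost function, while the classical optimizer decides which parameters to try next.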

Formidable Challenges and the Path to Fault Tolerance

The extraordinary potential of quantum computing is matched by the formidable engineering and theoretical challenges that stand in the way of its full realization. The most significant of these is the inherent fragility of quantum information. Qubits are exquisitely sensitive to their environment; any unintended interaction with the outside world, through thermal fluctuations, electromagnetic radiation, or material defects, can cause a loss of quantum coherence, a process known as decoherence. This introduces errors into the computation and can rapidly destroy the delicate superpositions and entanglements that power quantum algorithms. The error rate of current Noisy Intermediate-Scale Quantum (NISQ) hardware, which offers only tens to a few hundred noisy qubits, is orders of magnitude higher than that of classical transistors, severely limiting the depth and complexity of circuits that can be reliably executed. This noise susceptibility necessitates the development of sophisticated quantum error correction (QEC) codes. Unlike classical error correction, which can simply copy and check bits, quantum error correction must protect information without being able to copy it, due to the no-cloning theorem. QEC works by encoding a single logical qubit into a highly entangled state of many physical qubits, creating redundancy that allows errors to be detected and corrected without directly measuring the quantum information itself. Pioneering codes like the surface code have been developed for this purpose, but they come with a massive resource overhead; current estimates suggest that thousands of physical qubits may be required to create a single, stable logical qubit. Demonstrations of error correction, such as recent experiments by Google and Quantinuum showing a reduction in error rates by increasing the number of physical qubits per logical qubit, are critical milestones on the path to fault-tolerant quantum computation.
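The idea of detecting errors without reading the protected information can be illustrated with a classical analogue of the three-qubit bit-flip code. This is a simplified sketch: it handles only bit flips, whereas real codes such as the surface code must also correct phase errors and extract syndromes through additional measurement qubits. All function names are illustrative assumptions.

```python
from itertools import product


def encode(bit):
    """Encode one logical bit redundantly into three physical bits."""
    return [bit, bit, bit]


def syndrome(codeword):
    """Two parity checks that locate a flip without revealing the encoded value."""
    return (codeword[0] ^ codeword[1], codeword[1] ^ codeword[2])


# Which physical bit to flip back for each syndrome (None means no error seen).
CORRECTION = {(0, 0): None, (1, 0): 0, (1, 1): 1, (0, 1): 2}


def correct(codeword):
    """Apply the correction indicated by the syndrome."""
    fixed = list(codeword)
    flip = CORRECTION[syndrome(fixed)]
    if flip is not None:
        fixed[flip] ^= 1
    return fixed


def logical_error_rate(p):
    """Exact failure probability when each bit flips independently with probability p."""
    total = 0.0
    for errors in product((0, 1), repeat=3):
        received = [b ^ e for b, e in zip(encode(0), errors)]
        if correct(received)[0] != 0:
            weight = sum(errors)
            total += p ** weight * (1 - p) ** (3 - weight)
    return total


for p in (0.1, 0.01, 0.001):
    print(p, logical_error_rate(p))
```

Any single flip is corrected, so the encoded bit fails only when two or more physical bits flip, giving a logical error rate of 3p² - 2p³. For a physical error rate of 1%, that is roughly 0.03%. The syndrome is identical whether the encoded value is zero or one, which is why measuring it does not disturb the stored information. The same principle, suppressing logical errors by adding physical redundancy, underlies the experimental milestones mentioned above.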

Beyond error correction, the field grapples with the dual challenges of scalability and talent shortage. Scaling quantum processors from hundreds to millions of qubits, as required for broadly impactful applications, presents immense difficulties in control electronics, chip design, and cryogenic systems. Companies are exploring modular architectures, such as IBM’s Quantum System Two, which networks multiple smaller processors to achieve scale. Simultaneously, a significant bottleneck is the scarcity of a skilled workforce proficient in the convergent disciplines of quantum mechanics, computer science, and electrical engineering. This talent gap threatens to slow progress, prompting universities and companies to launch new educational programs and training initiatives. In response to these challenges, major technology companies have published detailed roadmaps outlining their strategic paths forward. IBM projects achieving quantum advantage, where a quantum computer outperforms a classical one on a commercially relevant problem, by 2026, with a focus on applications in chemistry and materials science, and aims for fault-tolerant systems by the end of the decade. Google has articulated a goal of building a useful, error-corrected quantum computer by 2029. Other players, including Microsoft, IonQ, and Quantinuum, are pursuing diverse technological paths, from topological qubits to trapped ions, each with its own timeline and set of milestones. These roadmaps, while ambitious, reflect a collective belief that the engineering problems of quantum computing, while profound, are ultimately solvable.

Conclusion

Quantum programming is the pivotal discipline at the frontier of a computational revolution. It represents a fundamental re-imagining of how information is processed and problems are solved, drawing from the deepest principles of physics to extend the reach of human computation. This report has traversed the conceptual foundations of the quantum paradigm, the architectural principles of qubits and circuits, the practical ecosystem of programming frameworks, and the transformative potential of core quantum algorithms. It has also confronted the stark realities of decoherence, error, and scalability that currently define the Noisy Intermediate-Scale Quantum era. The journey toward fault-tolerant quantum computing is a monumental scientific and engineering undertaking, akin to the early days of classical computing, requiring sustained investment, interdisciplinary collaboration, and the cultivation of a new generation of quantum-literate scientists and engineers. The aggressive roadmaps of industry leaders and the significant investments from governments worldwide signal a firm consensus that this journey is not only worthwhile but essential. Quantum computing will not replace classical computers; rather, it will augment them, creating powerful hybrid systems capable of tackling the world’s most complex and computationally intractable problems in science, industry, and society. The era of quantum utility is dawning, and the work of quantum programmers today is laying the groundwork for the discoveries of tomorrow.