Computational Mathematics in Modern Military Theory

Introduction

The character of war is eternally bound to the character of the science of its age. In the twenty-first century, science is computational, and the character of conflict is increasingly defined by the silent, relentless logic of algorithms. Computational mathematics — the intersection of advanced mathematical models, algorithmic problem-solving, and high-performance computing — has evolved from a tactical tool to a foundational pillar of modern military theory. Its influence permeates every level of strategy, from the instantaneous calculation of artillery trajectories to the grand strategic simulations of great-power competition. This transformation, however, is not merely technical; it is profoundly theoretical, forcing a re-examination of the very principles of warfare articulated by Clausewitz, Sun Tzu, and Jomini. The integration of computational models promises to cut through the fog of war, yet it simultaneously introduces new frictions and biases, challenging our deepest assumptions about the nature of conflict, the role of human judgment, and the ethical boundaries of automated violence. This article undertakes a critical examination of computational mathematics as a force in military theory, tracing its historical origins, deconstructing its inherent assumptions, weighing competing perspectives on its utility, and projecting the profound implications of its continued ascent for the future of strategic affairs.

Historical Background and Foundational Principles

The marriage of mathematics and warfare is ancient, but its computational consummation is a product of the industrial and digital revolutions. The foundational period for modern computational mathematics in military science was the Second World War, which served as a massive catalyst for applied mathematical research. This era witnessed the emergence of two pillars that would define the field: operations research and cryptography. Operations research used mathematical analysis to optimize complex military operations, from anti-submarine warfare campaigns to logistical supply chains, introducing quantitative rigor to tactical and operational decision-making. Concurrently, the cryptographic war, most famously at Bletchley Park, saw mathematicians like Alan Turing pioneer computational approaches to breaking German ciphers. This effort was not merely about abstract theory; it demanded the creation of physical machines such as the electromechanical Bombe, used against Enigma, and the electronic Colossus, built to attack the Lorenz cipher, which were among the first steps toward the digital computer. These wartime endeavors demonstrated that complex, adversarial processes could be modeled and manipulated mathematically, yielding intelligence and efficiencies that were previously unimaginable.

In the decades following WWII, the field expanded from solving specific problems to framing entire strategic paradigms. The Cold War solidified the role of systems analysis and game theory in grand strategy, as thinkers like Thomas Schelling applied formal mathematical logic to the diplomacy of violence and nuclear deterrence. The development of large-scale digital computers enabled ever more sophisticated simulations and models, transforming planning processes. This historical trajectory reveals a core set of foundational principles that underpin computational military theory. First is the principle of optimization, the belief that military processes — from targeting to logistics — can be refined through mathematical techniques to maximize effectiveness and minimize cost or risk. Second is the principle of representation, which holds that key aspects of warfare, including terrain, adversary behavior, and command decisions, can be formally abstracted into computable data structures without losing essential meaning. Third is the principle of prediction, the ambition that, with sufficient data and processing power, the outcomes of strategic interactions can be forecasted with useful accuracy, thereby thinning the fog of war. These principles, born from the successes of mid-century applied mathematics, continue to guide the development and application of computational tools in military theory today.
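
To make the game-theoretic framing concrete, the following is a minimal sketch of a two-player crisis game in the style of Chicken. It is not drawn from Schelling's own work: the payoff numbers and the names `STRATEGIES`, `PAYOFFS`, and `pure_nash_equilibria` are illustrative assumptions. The code enumerates every pair of strategies and keeps those from which neither side can gain by deviating alone.

```python
from itertools import product

# Hypothetical payoffs (row player, column player) for a Chicken-style crisis.
# Higher is better; the numbers are illustrative assumptions, not data.
STRATEGIES = ("back_down", "escalate")
PAYOFFS = {
    ("back_down", "back_down"): (0, 0),
    ("back_down", "escalate"): (-1, 1),
    ("escalate", "back_down"): (1, -1),
    ("escalate", "escalate"): (-10, -10),
}


def pure_nash_equilibria(payoffs, strategies):
    """Return strategy pairs where neither player gains by deviating alone."""
    equilibria = []
    for row, col in product(strategies, repeat=2):
        row_payoff, col_payoff = payoffs[(row, col)]
        # Row player compares against every alternative row strategy.
        row_is_best = all(payoffs[(alt, col)][0] <= row_payoff for alt in strategies)
        # Column player compares against every alternative column strategy.
        col_is_best = all(payoffs[(row, alt)][1] <= col_payoff for alt in strategies)
        if row_is_best and col_is_best:
            equilibria.append((row, col))
    return equilibria


if __name__ == "__main__":
    print(pure_nash_equilibria(PAYOFFS, STRATEGIES))
```

Under these assumed payoffs the only stable outcomes are the two asymmetric ones, in which one side escalates and the other backs down. The toy model shows the kind of question formal analysis poses, namely which side can credibly commit first, while leaving out almost everything that makes a real crisis political.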

Underlying Assumptions, Inconsistencies, and Biases

The application of computational mathematics to military theory rests upon a set of often-unstated assumptions that, upon critical examination, reveal significant inconsistencies with the chaotic reality of war. The most fundamental of these is the assumption of rationalizability — that strategic behavior can be meaningfully captured by formal models of instrumental rationality. This perspective often reduces strategy to a discrete choice problem, ignoring the complex, iterative, and often ill-structured process by which political communities actually organize and employ violence. Computational models require structure and quantifiable parameters, but strategy often involves intangible elements like morale, political will, and cultural context that resist easy quantification. This leads to a constant danger of what strategic scholars call “just-so stories,” where the model’s clean logic is retroactively imposed on messy historical events, creating a false sense of explanatory rigor.

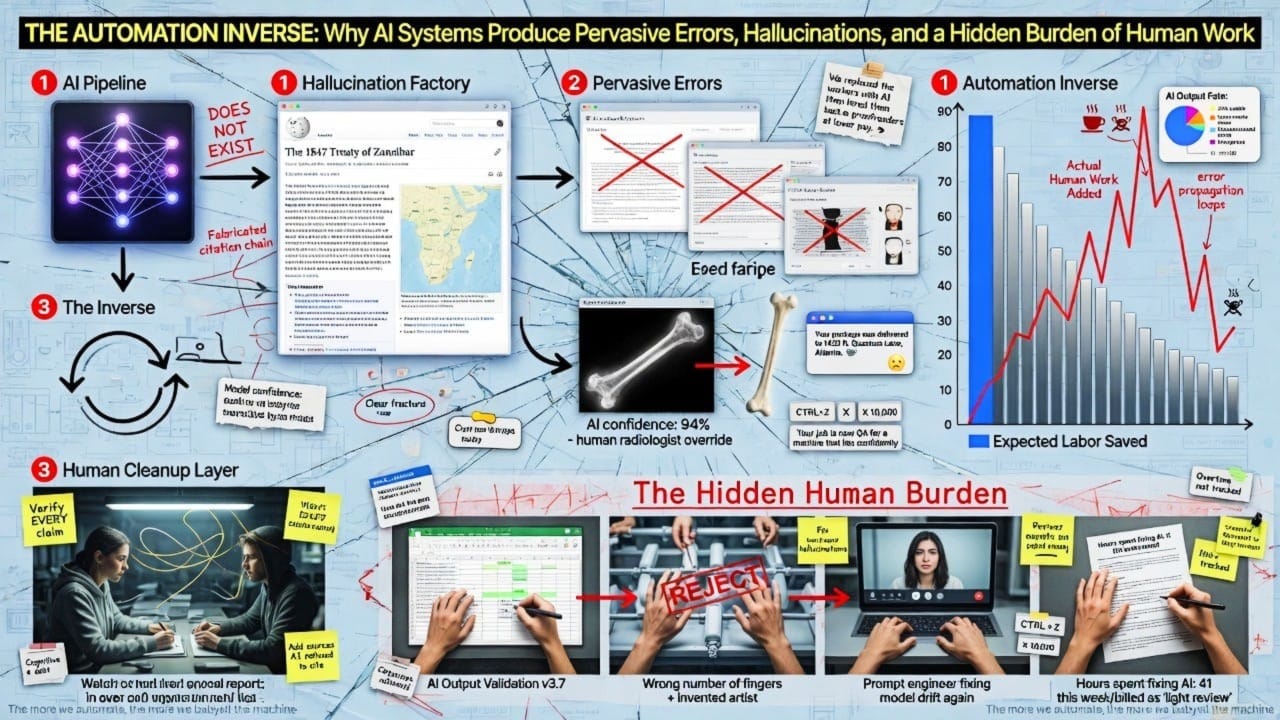

Perhaps the most pressing critique involves the problem of algorithmic bias, which demonstrates that computational systems are not the neutral, objective tools they are often presumed to be. Bias can be introduced at multiple points in a system’s lifecycle. It begins with bias in data, where unrepresentative training data, perhaps drawn from a limited set of conflict zones, leads to models that perform poorly in different contexts. This is compounded by bias in design and development, where the choices made by programmers and engineers, who often come from homogeneous professional backgrounds with their own cultural assumptions, are embedded into the very architecture of the algorithm. Finally, there is bias in use, where human operators, susceptible to “automation bias,” may over-trust the system’s outputs, creating a self-reinforcing feedback loop that amplifies initial flaws. The consequences are not merely technical; they are legal and moral. A biased targeting system, for example, could wrongfully associate individuals of a particular demographic with combatant status, leading to tragic outcomes. This reveals that the supposed neutrality of calculative rationality is a myth; these systems are inherently social and political, reflecting the biases and values of their human creators and the societies from which they emerge.

Competing Perspectives and Counterarguments

Within strategic studies, the rise of computational mathematics has provoked a robust debate, with perspectives ranging from enthusiastic adoption to deep skepticism. Proponents, often from the operations research and systems analysis communities, argue that computational modeling provides a necessary corrective to the imprecision of purely verbal strategic theory. They contend that the formal logic of computation forces theorists to clarify their assumptions, expose hidden inconsistencies, and subject their ideas to a rigor that narrative case studies cannot match. From this viewpoint, models are not meant to be perfect predictors but are valuable as tools for exploration — for bounding outcomes, illuminating core uncertainties, and conducting “what-if” analyses in a risk-free simulated environment. This camp sees computational methods as the only way to manage the overwhelming complexity and data density of modern battlespaces, arguing that human cognition alone is insufficient for integrating the myriad factors involved in high-tempo, multi-domain operations.

The skeptical perspective, often rooted in classical strategic theory and history, challenges the very premise that the essence of war can be captured by computation. Drawing on the Clausewitzian concept of war as a “chameleon,” whose nature is defined by chance, uncertainty, and the clash of opposing wills, these scholars argue that modeling war is a fundamentally different endeavor from modeling physical systems. They point to the poor predictive record of highly quantified approaches in past conflicts, such as the systems analysis applied during the Vietnam War, which failed to deliver victory despite its technical sophistication. The critique is that computational models inevitably flatten the human and political dimensions of conflict, creating a dangerous illusion of control. The true strength of the strategist, from this perspective, lies not in calculation but in judgment — a faculty developed through historical study, practical experience, and an understanding of the psychological and moral forces that drive conflict. This view holds that an over-reliance on models can lead to strategic brittleness, where a force optimized for a simulated war may be dangerously unprepared for the unpredictable reality of one fought among people.

Broader Implications and Future Significance

The ascendancy of computational mathematics is not a mere technical trend; it is reshaping the very ontology of military theory and the institutions that produce it. Its most profound implication is the potential for a fundamental shift in strategic epistemology — how military knowledge is created, validated, and applied. The traditional foundation of strategic knowledge has been historical analysis and the reasoned judgment of experienced practitioners. Computational modeling introduces a new, competing foundation based on simulated data and algorithmic output. This shift privileges different skillsets, potentially altering the professional identity of the strategist from that of a historian-philosopher to that of a data scientist. Furthermore, as the development of advanced artificial intelligence and quantum computing is increasingly driven by corporate giants like Google rather than state-sponsored laboratories, the center of gravity for military innovation may be shifting from the public to the private sector, raising questions about the future of government control over strategic technologies.

The ethical and legal implications are equally vast. The development of lethal autonomous weapon systems (LAWS) powered by complex algorithms forces a re-examination of international humanitarian law principles such as distinction and proportionality. If algorithmic bias is, as the preceding critique suggests, an inherent and difficult-to-mitigate risk, then delegating life-and-death decisions to such systems poses grave dangers. This creates a pressing need for new norms, technical standards, and potentially binding international regulations to ensure that the application of computational force remains subject to meaningful human judgment and legal accountability. Ultimately, the central theoretical significance of computational mathematics lies in its challenge to the enduring Clausewitzian paradigm. If war can be transformed from a human-centric “duel” into a largely automated process of system optimization, its political nature may fundamentally change. The task for military theorists in the coming decades will be to integrate the undeniable power of computational methods with a wisdom that recognizes their limits, ensuring that strategy remains a political art informed by, rather than subservient to, a mathematical science.

Real-World Applications Across Domains

The theoretical power of computational mathematics is made tangible through its diverse applications across the modern military enterprise. In the realm of cryptography and cybersecurity, the field’s origins in code-breaking have evolved into a continuous digital arms race. Modern secure communications rely on sophisticated algorithms based on number theory and modular arithmetic, while offensive and defensive cyber operations use computational models to probe for vulnerabilities, detect intrusions, and project power in cyberspace. Ballistics and targeting provide another classic example, where the principles of projectile motion — governed by trigonometric functions and differential equations — are computed in real-time by fire control systems to ensure accuracy for everything from infantry mortars to naval artillery. This application has been refined for decades, representing one of the most mature and unquestionably effective uses of military mathematics.
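
The ballistics case can be illustrated with a short sketch. It compares the closed-form range of a projectile in a vacuum with a step-by-step numerical integration of the equations of motion. The function names and the lumped drag constant `drag_k` are hypothetical, chosen only for illustration; an operational fire control model would presumably account for many more factors, such as wind and air density, that are omitted here.

```python
import math

G = 9.81  # gravitational acceleration in m/s^2


def vacuum_range(speed, angle_deg):
    """Closed-form range on flat ground with no air resistance."""
    return speed ** 2 * math.sin(2 * math.radians(angle_deg)) / G


def simulated_range(speed, angle_deg, drag_k=0.0, dt=0.001):
    """Integrate the equations of motion with semi-implicit Euler steps.

    drag_k is a hypothetical lumped drag constant (1/m): the drag
    deceleration has magnitude drag_k * v^2 and opposes the velocity.
    """
    angle = math.radians(angle_deg)
    x, y = 0.0, 0.0
    vx, vy = speed * math.cos(angle), speed * math.sin(angle)
    while True:
        v = math.hypot(vx, vy)
        ax = -drag_k * v * vx
        ay = -G - drag_k * v * vy
        # Update velocity first, then position with the new velocity.
        vx += ax * dt
        vy += ay * dt
        new_x, new_y = x + vx * dt, y + vy * dt
        if new_y < 0.0:
            # Linearly interpolate the last step to locate ground impact.
            frac = y / (y - new_y)
            return x + frac * (new_x - x)
        x, y = new_x, new_y


if __name__ == "__main__":
    print(vacuum_range(100.0, 45.0))
    print(simulated_range(100.0, 45.0))
    print(simulated_range(100.0, 45.0, drag_k=0.001))
```

With `drag_k` set to zero the numerical result should track the trigonometric formula closely, which is a useful sanity check. A positive drag constant shortens the range, and the differential-equation form then becomes necessary because this drag model has no comparably simple closed-form solution.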

More recently, computational methods have become central to logistics and force deployment. The immense challenge of moving troops and supplies across the globe is framed as a massive optimization problem, solved using techniques like linear programming to minimize cost and time while maximizing throughput. Perhaps the most expansive application is in training and wargaming. High-fidelity simulations and virtual training environments use computational models to generate realistic physical and behavioral dynamics, allowing units to train collectively in complex, synthetic battlespaces before ever setting foot in a real one. These models allow military leaders to explore the second- and third-order effects of tactical decisions, providing a crucial space for learning and adaptation. Finally, emerging applications in artificial intelligence and autonomous systems represent the frontier of the field. Machine learning algorithms are being developed for tasks ranging from image recognition in surveillance footage to the predictive maintenance of aircraft engines. Each of these applications represents a point where abstract mathematical theory is translated into concrete military capability, demonstrating the deeply practical consequences of the computational turn in strategic affairs.
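
The logistics framing can be sketched with a deliberately tiny transportation problem. All depots, bases, quantities, and costs below are hypothetical. With only two depots and two bases, the whole plan is fixed by a single quantity, so the sketch simply enumerates every whole-unit feasible plan and picks the cheapest. This brute-force search stands in for a linear programming solver, which is what a problem of realistic size would require.

```python
# Hypothetical two-depot, two-base transportation problem (all figures assumed).
SUPPLY = {"depot_a": 40, "depot_b": 60}  # units available
DEMAND = {"base_x": 50, "base_y": 50}    # units required
COST = {                                 # cost per unit shipped
    ("depot_a", "base_x"): 4,
    ("depot_a", "base_y"): 6,
    ("depot_b", "base_x"): 5,
    ("depot_b", "base_y"): 3,
}


def plan_for(a_to_x):
    """Build the full plan implied by the depot_a -> base_x quantity.

    Supply and demand are balanced, so the other three shipments follow
    from the requirement that depot_a ships everything and both bases are
    exactly supplied.
    """
    a_to_y = SUPPLY["depot_a"] - a_to_x
    return {
        ("depot_a", "base_x"): a_to_x,
        ("depot_a", "base_y"): a_to_y,
        ("depot_b", "base_x"): DEMAND["base_x"] - a_to_x,
        ("depot_b", "base_y"): DEMAND["base_y"] - a_to_y,
    }


def total_cost(plan):
    """Sum cost per unit times quantity over every route."""
    return sum(COST[route] * qty for route, qty in plan.items())


def cheapest_plan():
    """Enumerate every whole-unit feasible plan and return the cheapest."""
    feasible = []
    for a_to_x in range(SUPPLY["depot_a"] + 1):
        plan = plan_for(a_to_x)
        if all(qty >= 0 for qty in plan.values()):
            feasible.append(plan)
    return min(feasible, key=total_cost)


if __name__ == "__main__":
    best = cheapest_plan()
    print(best, total_cost(best))
```

In this assumed instance the cost falls steadily as more of depot A's stock goes to base X, so the cheapest plan sits at the edge of the feasible range. That is the property linear programming exploits: with a linear objective, an optimum can always be found at a corner of the feasible region, so a solver need not examine every plan.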

Conclusion

Computational mathematics has irrevocably altered the landscape of military theory. It offers a powerful language to describe, analyze, and simulate the complexities of conflict, providing tools that can enhance precision, optimize resource allocation, and expand the boundaries of strategic planning. Yet, as this examination has revealed, this power is coupled with profound perils. The assumptions of rationalizability can blind strategists to the inherently political and human nature of war, while the embedded biases of algorithmic systems can perpetuate discrimination and erode accountability. The future of military theory does not lie in a choice between classical wisdom and computational power, but in a synthesis that acknowledges the strengths and limitations of both. The strategist must become bilingual, fluent in the languages of both history and algorithm, of judgment and calculation. The ultimate challenge is to ensure that the calculus of computation remains the servant of a deeper political and ethical logic, lest the very tools designed to secure victory instead lead us into a form of conflict whose speed and automation outstrip our capacity for human control.